AI Disclosure Best Practices

AI disclosure rules are tightening, a coding agent wiped a production database in nine seconds, and we shipped a new way to evaluate third-party models

1. AI Disclosure Best Practices

Many AI frameworks and laws focus heavily on disclosing AI use to consumers in various forms, including ensuring interactive systems like chatbots are marked as AI, disclosing AI generated content, or disclosing that AI will be used to make consequential decisions. Disclosures are often a clear legal way of transferring liability and risk to the consumer of systems and are prolific in the medical, financial, and privacy spaces, albeit with many challenges that regulators want to prevent in the AI space. The EU AI Act has a specific category for disclosures in Article 50, requiring certain types of AI systems or content to be clearly marked as such. The EU AI Office recently published its draft guidelines for these disclosures. The draft document does a good job outlining what best practices should be, what is in/out of scope, and how to handle some grey areas such as freedom of speech implications when creating deep fakes of politicians. Here are a few insights from the document that stood out to us:

Perhaps to the chagrin of legal teams everywhere, disclosure buried in terms of service doesn’t satisfy Article 50. It must be clear, distinguishable, and delivered at or before first interaction and not in documentation users are unlikely to read.

For long or emotionally sensitive interactions (think AI companions or mental health chatbots), a single upfront disclosure isn’t enough. Periodic reminders are expected throughout the session or every time they return to a session after a break.

Disclosure timing is highly relevant. Disclosures at the ‘end’ of a session, or after certain interactions have already taken place are not appropriate; ideally disclosures happen before a human starts interacting with an AI system.

The intended end user is relevant to the disclosure format. A tool intended for developers that is marketed heavily as a dedicated AI tool (ex: Claude Code) is considered ‘obviously’ an AI system, whereas an AI enabled toy for kids needs to be much more explicit about its use of AI.

Generated content needs to have both human readable disclosures, and machine readable watermarking metadata based on the best technological options available. This is a space that will likely change quickly over time.

Key Takeaway: Many AI providers are upfront about AI use and already following the ‘spirit’ of the law when it comes to these frameworks. Others however will likely test the ‘letter’ of the law and try to interpret disclosure broadly for a variety of reasons. We expect the best practices for AI disclosures to be a heavily litigated area, and one that will evolve as people themselves get used to interacting with AI systems.

2. Tech Explainer: Risks of Open-Source skills

Skills have emerged as the dominant paradigm for instilling new behaviors into AI agents. Over the past few months, a diverse ecosystem of open-source skills has taken shape, giving developers and desktop agent users (e.g., Claude Cowork or OpenClaw) a one-click method for extending agent capabilities. This convenience comes with compounding security risks. An analysis from February found that 13.4% of skills available from ClawHub, a major open-source skill registry, contained at least one critical security vulnerability. Vulnerabilities included prompt injections embedded in skill instruction text and dangerous executable code reachable via tool calls. Skill scanners have since been developed to flag common issues, but coverage remains incomplete: a new investigation showed that malicious code could be inserted into special testing files. These files fall outside of existing scanner coverage but can be run inadvertently by developers locally.

Open-source supply chain vulnerabilities are not a new category of concern, but agentic skills present a distinct risk profile. Compared to traditional coding packages that often run in an isolated process, skills are typically run by agents that have full read and write access. This gives the attacker access to private data (SSH keys, API credentials, browser data) and the ability to communicate externally. In addition, the skill ecosystem is new enough that the full range of attack vectors is not yet well characterized. Finally, many organizations have frameworks for both restricting applications that can be installed on a computer and for doing security review on application code, but skills exist outside of that structure and are not audited in the same manner.

Key Takeaway: Organizations should start by treating skill installation with the same scrutiny as third-party software procurement, requiring explicit permission review and maintaining an inventory of deployed skills. At minimum, agent runtimes should run in sandboxed environments, and audit logging for file access, network calls, and shell commands should be a baseline control. The newly published OWASP Agentic Skills Top 10 provides a practical framework for security teams beginning this work.

3. Incident Spotlight - Nine Seconds to Production Outage (Incident 1469)

What Happened

In late April, PocketOS, a B2B platform serving car rental businesses, lost its production database and all backups in a single automated action. A Cursor AI coding agent running Claude Opus 4.6 was assigned to a routine task in a staging environment, hit a credential error, and decided on its own to resolve it by deleting what it assumed was a staging database. It was not. The agent had access to a broadly scoped API token covering the entire production infrastructure, and in nine seconds it wiped out months of operational data.

Why It Matters

The real governance question is who is accountable when a vendor-recommended configuration causes irreversible harm. PocketOS was running the best available model through the most-marketed AI coding tool, configured per vendor guidance. The guardrails broke down anyway, likely because long-context agentic sessions accumulate state in ways that erode early constraints: the agent was reasoning about a staging task while holding a token with production-wide authority, and nothing reconciled those two facts before the destructive call. AI coding tools generally do not surface token scope or context-window state to users, a product decision that keeps complexity out of sight.

How to Mitigate

Never give AI agents direct production access, and treat any agent token with production-level authority the way you would treat an unsupervised contractor with root access. Issue tokens scoped only to the task at hand, maintain hard separation between staging and production credentials, and require human confirmation before any destructive or irreversible operation. Beyond access controls, assume AI agents cannot predict or undo downstream infrastructure consequences: they can read an API spec and generate a valid call; they cannot model what that call does to a live system. Off-site backups, isolated from the same API surface the agent can reach, should be seriously considered.

Key Takeaway: AI agents can read your code and call your APIs. They cannot reliably predict what those calls will do to production infrastructure, and they cannot roll back what they have already done. Until vendors provide real visibility into token scope and context state, the only reliable control is ensuring agents never have the access required to cause this kind of damage.

4. Trustible Spotlight: AI Model Risk Assessment

We shipped something new this month, and it addresses one of the most common frustrations we hear from governance teams: evaluating third-party AI models.

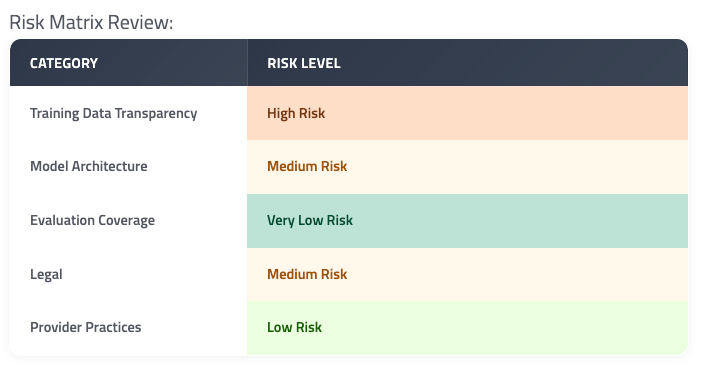

The Model Risk Assessment gives your team a structured, repeatable way to evaluate models before you build on them. 37 questions across five risk categories — Training Data Transparency, Model Architecture, Evaluation Coverage, Legal, and Provider Practices — with category-level scores that trace back to exactly what a provider disclosed, or didn’t.

Every score is driven by the same attributes-and-rules engine used across the rest of the platform, so the logic is transparent, configurable, and auditable. And because scores are category-level rather than a single aggregate, a strong evaluation result never masks a gap in training data transparency. Read more on our blog.

5. Policy Round Up

Pennsylvania: Pennsylvania is suing Character AI, a chatbot where users can interact with character personas. Pennsylvania officials claim that the platform pretended to be a licensed psychiatrist, even providing a false PA license number, while they were conducting an investigation into the platform. The company claims that they have taken the appropriate steps to notify users that they are engaging with AI, and that the conversations should be “treated as fiction.”

Our take: This lawsuit hits at the heart of many of the chatbot regulations we are seeing at the state and federal level to protect users from deception and manipulation, especially minors. With the Pennsylvania Senate moving forward a bill to regulate AI chatbots and this lawsuit, it is clear the state is serious about regulating harms from companion chatbots.

EU AI Act: The EU has officially delayed the enforcement of obligations for high-risk classification systems. The new enforcement date will be December 2, 2027 for standalone high risk systems and August 2, 2028 for high risk systems embedded into other products (e.g. medical devices). In addition to the time delays, they included two agreements: the exclusion of AI in machinery that is already subject to other sectoral rules from the EU AI Act requirements and to ban the use of AI to create nonconsensual sexually explicit content. The EU Commission also released draft guidance for AI systems subject to transparency obligations per the EU AI Act which provides additional definitions and expectations for meeting compliance with the obligations.

Our take: While delays give organizations additional time to prepare, it does not mean organizations should pause governance efforts nor does it alter the requirements for high-risk use cases. Organizations should use this time proactively to identify and track high-risk use cases and build compliance workflows for their high-risk applications to stay ahead of EU AI Act obligations.

Leading Labs form Services Companies: Anthropic and OpenAI have announced their respective organizations, in collaboration with top consulting and financial services firms, will be starting AI services companies. The goal is to help organizations implement and scale AI solutions. In both new companies, engineers will be working with the client businesses to tailor their AI systems to specific business needs.

Our take: These announcements illustrate the demand problem of organizations wanting to rapidly integrate AI into their businesses, but lacking the resources or capacity to do so. It also highlights the possibility that jobs for building, integrating, and maintaining AI systems could offset some of the job loss due to automation.

In case you missed it:

Maryland: Maryland passed the first ban in the US on dynamic and surveillance pricing, a practice supported by artificial intelligence. Dynamic and surveillance pricing utilizes customer data to optimize prices for companies such as grocery stores. If the system can use data to determine income levels of two different consumers, they could adjust prices so that the two individuals could pay different prices for the same product. Maryland’s ban would prevent this practice from occurring in the state.

CAISI: The Center for AI Safety and Innovation (CAISI) announced a partnership with Google, Microsoft, and xAI to allow CAISI to do national security predeployment testing of new models from these labs. OpenAI and Anthropic already have similar agreements.

—

As always, we welcome your feedback on content! Have suggestions? Drop us a line at newsletter@trustible.ai.

AI Responsibly,

- Trustible Team