Why AI Monitoring is Hard

Plus, Evaluating AI Evaluations, AI Personality Theft, and Policy Updates

(Source: Claude)

1. Why AI Monitoring is Hard

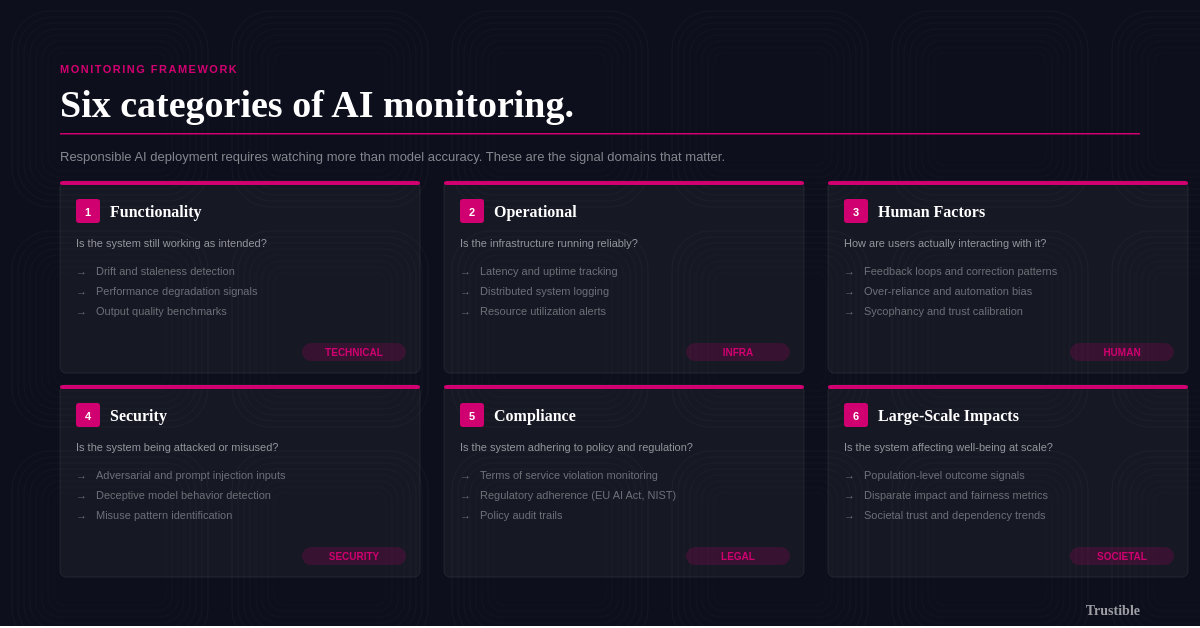

When enterprises say they want to monitor their AI systems, they rarely mean the same thing. For a security team, monitoring means watching for adversarial attacks and prompt injection. For a compliance officer, it means tracking regulations and court cases that could impact their AI systems. For a product team, it means making sure the model hasn’t quietly gotten worse at the thing it was deployed to do. A new report from NIST formalizes this fragmentation, organizing post-deployment monitoring into six distinct categories that rarely get discussed together:

Functionality — Is the system still working as intended? (Detecting drift, staleness, performance degradation)

Operational — Is the infrastructure running reliably? (Latency, uptime, logging across distributed systems)

Human Factors — How are users actually interacting with the system? (Feedback loops, over-reliance, sycophancy)

Security — Is the system being attacked or misused? (Adversarial inputs, deceptive model behavior, misuse detection)

Compliance — Is the system adhering to relevant regulations and policies? (Terms of service violations, regulatory adherence)

Large-Scale Impacts — Is the system promoting or degrading human well-being at a population level?

The report, drawn from three workshops with over 250 practitioners and a review of 87 papers, finds that most organizations are only monitoring one or two of these categories, and the field lacks agreed-upon methods, shared terminology, and basic consensus on who in the AI supply chain is even responsible for each. The incentive problems compound this: monitoring is expensive, publicly reporting incidents carries legal and competitive risk, and AI outputs are non-deterministic enough that establishing a reliable performance baseline is itself an open research problem. For deployers specifically, the report is a formal acknowledgment from NIST that the governance burden is shifting downstream, and the tools and standards needed to manage it don’t yet exist. The best practices for collecting and analyzing this kind of data are highly immature, and quickly shifting alongside the AI technology stack.

Trustible’s own AI Monitoring whitepaper, and blog post separates the ideas of ‘internal’ monitoring which focuses on analysing highly technical data about the relevant AI system, and ‘external’ monitoring which aims to collect information from outside the deployers boundaries. Internal monitoring largely maps to NIST’s Functionality, Operational, and Security factors while, ‘external’ monitoring maps to the Human Factors, Compliance, and Large Scale Impacts elements.

Key Takeaway: AI Monitoring is all of these things, and there’s therefore no ‘silver bullet’ solution for all forms of AI monitoring. The NIST framework is a useful forcing function for governance teams to audit which monitoring categories they’ve actually addressed and which they’ve implicitly assumed someone else owns.

2. Tech Explainer: The Trouble with Evaluations

As AI systems grow more advanced, evaluating them gets more complicated; at the same time, we are seeing a decreased consistency in how evaluations are reported for general-purpose AI models. Unlike traditional machine learning, where models are built for a specific purpose (e.g. predicting if an email is spam) and can be evaluated against that goal, GPAI models are adapted for downstream tasks and assessed benchmarks (e.g. math problem solving) that are often inconsistently applied. Two providers can report scores on the same benchmark while using different prompting strategies or answer aggregation techniques, making direct comparisons unreliable. A benchmark can also misrepresent what it claims to measure: interview-style coding questions won’t tell you much about how a model performs in a production system.

The core problem is a lack of standards for what to report. Trustible’s work with the EvalEval coalition produced a universal schema for documenting evaluation results, giving developers and deployers a consistent reporting standard and a way to compare why scores on the “same benchmark” diverge. Related efforts like BenchRisk, EvalFactSheets, and the Construct Validity Checklist give developers and deployers structured tools to report on and assess benchmark quality before trusting the results. Adoption is still limited, but these are now available reference points for model developers, deployers, users and policy-makers. The challenge will get harder as evaluations shift from models to agents, where the harness (i.e. tools and integrations surrounding a model) shape results as much as the model itself.

Key Takeaway: AI Literacy requires skeptically reviewing performance headlines touted by AI providers. While organizations should construct internal benchmarks when picking the best models for their systems, many of the same challenges persist and the frameworks shared can help.

3. AI Incident Spotlight - AI Style, Personality, and Identity Theft (Incident 1407)

What Happened: In late 2025, Grammarly launched an “Expert Review” tool where subscribers could upload writing and receive real-time editing feedback presented as coming from named journalists, authors, and academics, including novelist Stephen King and tech journalist Kara Swisher. Grammarly never sought or obtained consent from any of the named experts whose identities it used to sell the feature. In response, journalist Julia Angwin filed a lawsuit against Grammarly’s parent company Superhuman, and the feature was pulled shortly after.

Why It Matters: While the visual and auditory ‘likeness’ of a person has been discussed in depth in the context of AI generated images and video, this incident highlights an additional question of whether a person’s style and personality should also be included. In her lawsuit, and related NYT Op-Ed, Angwin argues: “My ability to earn a living rests on my ability to craft a phrase, to synthesize an idea, to make readers care about people and places they can only access through words on a page,”. For content creators, journalists, and subject matter experts whose reputations are themselves a professional asset, the commercial use of an AI simulation of their expertise is an existential threat to their way of life, and capable of causing massive reputational harm if the system is wrong. In this case, Grammarly is exposed from a liability angle, but the broader question about what constitutes ‘likeness’, and what rights someone should have to it are still unresolved. Existing privacy laws that may cover a person’s visual likeness under the context of ‘biometric’ data don’t clearly apply to a person’s ‘content style’.

How to Mitigate: Before shipping any feature that associates named individuals with AI-generated output, confirm you have explicit written consent or a licensing agreement. Some music and film artists have started to sell these rights to AI platforms. While AI laws targeting deep fakes are still being debated or implemented, existing tort law can still apply if there are reasonable claims of loss of income resulting from the AI. Right-of-publicity review should be a mandatory gate in the product development lifecycle for any feature involving real people’s names, styles, or personas, and that review needs to happen before engineering begins, not at launch.

Key Takeaway: There are many open questions about what constitutes a person’s likeness, and further debates about what kinds of rights a person should have around their likeness, and how to balance these issues with freedom of speech. Broader questions around likeness after death, or how to enforce these things on a global scale are unlikely to be resolved any time soon.

4. Policy Roundup

Anthropic vs. the Pentagon

The Trump administration officially labeled Anthropic a “supply-chain risk” and banned government agencies and military contractors from using Claude after contract negotiations broke down over two conditions Anthropic refused to drop. While they publicly threatened an aggressive stance on the issue, the formal notice was more limited in scope and only prohibits use of Claude to directly support DoD contracts. Despite the narrower designation, Anthropic filed two suits against the DOD, calling the actions “unprecedented and unlawful”. Major tech companies, including Microsoft, have voiced strong support for Anthropic.

Our Take: Anthropic may have ‘lost’ the battle, but in doing so, may be winning the war. At least PR wise, as consumer Claude downloads spiked as a result of the discussion. Organizations may need to ensure that they never get too deeply locked in with a single model provider in case LLM selections get further politicized over time. Organizations may also need to invest in processes for safely switching model providers.

EU AI Act Amendments Near the Finish Line

EU Parliament lawmakers reached a political deal within the Parliament that adds an explicit ban on AI-generated non-consensual intimate images and eases compliance rules for AI embedded in regulated products like medical devices. Separately, the EU Council approved its negotiating position on the Digital Omnibus, which would push back high-risk system deadlines by up to 16 months pending the availability of compliance standards.

Our Take: Now that the 3 main bodies of the EU policy making apparatus (Commission, Council, Parliament) have solidified their positions, political negotiations will begin between them. Given the common positions, enforcement of the high risk AI requirements of the EU AI Act are unlikely in 2026. Enforcement in late 2027, or early 2028 is now likely.

US Supreme Court Closes the Door on AI Copyright

The US Supreme Court declined to hear an appeal on Thaler v. Perlmutter, leaving intact the rule that works generated entirely by AI are ineligible for copyright protection. For now, Human authorship will continue to be a requirement for receiving IP protections in the US.

Our Take: Requiring human involvement to receive IP protections is a good balancing act in the AI ecosystem as it protects content creators in a fair way. However we expect more aggressive lobbying by tech firms on this issue over the next few years.

—

As always, we welcome your feedback on content! Have suggestions? Drop us a line at newsletter@trustible.ai.

AI Responsibly,

- Trustible Team